How to Revolutionize Community Search with Hybrid Retrieval and Automated Evaluation

Learn to transform community search by combining lexical and semantic retrieval, reducing effort tax, and enabling validation. A step-by-step guide.

Introduction

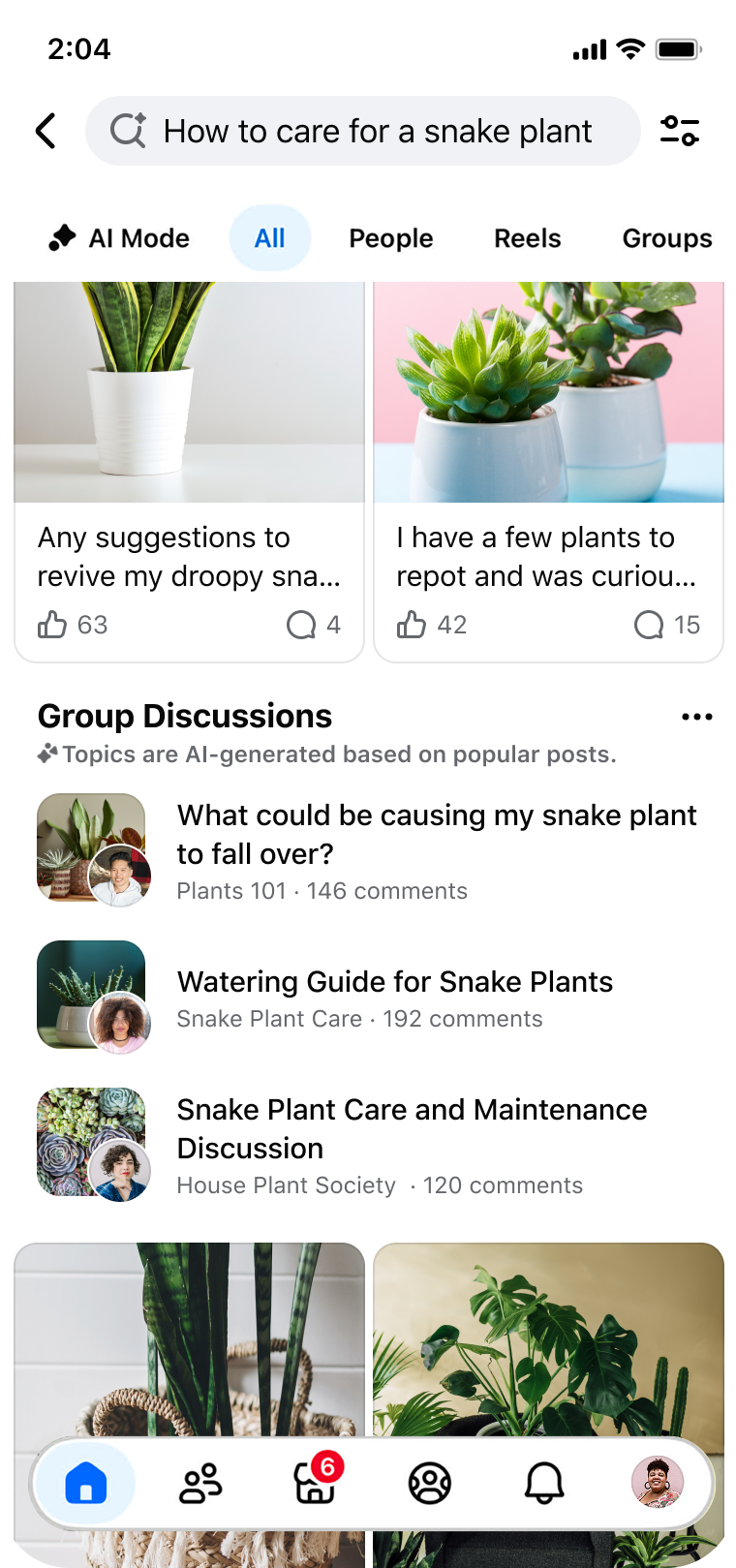

Modern communities are treasure troves of collective knowledge, but finding the right information often feels like searching for a needle in a haystack. Traditional keyword-based search fails to understand user intent, causing friction in discovery, consumption, and validation. This guide walks you through an approach inspired by the modernization of Facebook Groups Search: adopting a hybrid retrieval architecture and implementing automated model-based evaluation. By following these steps, you can help users discover relevant content with less effort, reduce the work needed to digest information, and empower them to validate decisions with community wisdom.

What You Need

- Text corpus: A dataset of community posts, comments, and discussions (e.g., forum threads, group messages).

- Lexical search engine: e.g., Elasticsearch, Solr, or Apache Lucene for keyword matching.

- Embedding model: A semantic embedding model (e.g., BERT, SBERT, or any modern sentence transformer) to capture meaning beyond exact words.

- Hybrid retrieval framework: A system that combines lexical and semantic search, such as reciprocal rank fusion or learned weighting.

- Evaluation pipeline: Tools to run automated model-based evaluations (e.g., using relevance labels or human judgment proxies).

- Computing resources: Sufficient CPU/GPU for embedding generation and model inference.

- Developer team: Engineers familiar with NLP, search systems, and evaluation methodologies.

Step-by-Step Guide

Step 1: Identify Friction Points in User Journeys

Start by analyzing how users currently search and consume community content. Map the three key friction points: discovery (finding relevant posts despite mismatched vocabulary), consumption (scrolling through long comment threads to extract consensus), and validation (verifying decisions using scattered community expertise). For example, note when users search for “small individual cakes with frosting” but the community calls them “cupcakes.” Document these cases to guide your design.

Step 2: Implement a Hybrid Retrieval Architecture

Move beyond pure lexical search by combining keyword matching with semantic understanding. Set up two parallel search systems: a lexical index that matches exact terms (with synonyms handled through a thesaurus or query expansion) and a semantic index that uses embeddings to match meaning. When a user searches for “Italian coffee drink,” the lexical system finds posts containing those words, and reaches posts that only say “espresso” if a synonym or expansion rule maps the query to that term. The semantic system can retrieve posts about “cappuccino” even when the word “coffee” never appears. Use a fusion algorithm, such as reciprocal rank fusion or a learned linear combination, to blend results from both systems, so that relevant content surfaces even when the user’s wording and the community’s wording diverge.
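As a rough illustration of the fusion step, here is a minimal sketch of reciprocal rank fusion in Python. It assumes each retriever has already returned a ranked list of document IDs; the post IDs and the constant `k=60` (a commonly used default, not a tuned value) are placeholders for illustration only.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results for the query "Italian coffee drink".
lexical_hits = ["post_espresso", "post_moka_pot", "post_latte"]
semantic_hits = ["post_cappuccino", "post_espresso", "post_latte"]

fused = reciprocal_rank_fusion([lexical_hits, semantic_hits])
print(fused)
```

Posts that both retrievers agree on (here `post_espresso` and `post_latte`) rise to the top, while posts found by only one system still appear further down. Because the method uses ranks rather than raw scores, the lexical and semantic scores do not need to be on the same scale.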

Step 3: Optimize for Consumption with Summarization and Ranking

To reduce the “effort tax” users face when reading long comment threads, introduce a summarization step. After retrieving a post, extract key comment snippets or generate a consensus summary using a text summarization model (e.g., T5 or BART). Additionally, re-rank the comments within a post based on relevance and popularity signals (e.g., likes, reply count, timeliness). For a query like “tips for taking care of snake plants,” the system should present a distilled list of best practices rather than forcing the user to wade through dozens of comments.
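The comment re-ranking part of this step could look something like the sketch below. It is only one possible scoring scheme: the `Comment` fields, the weights, and the 180-day half-life are all assumptions made for illustration, and the `relevance` value is assumed to come from the retrieval stage as a number between 0 and 1.

```python
import math
from dataclasses import dataclass


@dataclass
class Comment:
    text: str
    relevance: float  # assumed query relevance in [0, 1] from retrieval
    likes: int
    replies: int
    age_days: float


def rerank_comments(comments, top_n=3, w_rel=0.6, w_pop=0.3, w_fresh=0.1,
                    half_life_days=180.0):
    """Order comments by a weighted mix of relevance, popularity, and freshness."""
    # log1p dampens popularity so one viral comment does not dominate.
    raw_pop = [math.log1p(c.likes + c.replies) for c in comments]
    # Normalize popularity by the thread maximum (fall back to 1.0 if all zero).
    max_pop = max(raw_pop, default=0.0) or 1.0

    def score(comment, pop):
        # Freshness halves every half_life_days.
        freshness = 0.5 ** (comment.age_days / half_life_days)
        return (w_rel * comment.relevance
                + w_pop * (pop / max_pop)
                + w_fresh * freshness)

    ranked = sorted(zip(comments, raw_pop),
                    key=lambda pair: score(*pair), reverse=True)
    return [comment for comment, _ in ranked[:top_n]]


# Hypothetical thread for the query "tips for taking care of snake plants".
thread = [
    Comment("Water every 2-3 weeks and let the soil dry out.", 0.9, 40, 5, 30),
    Comment("Nice plant!", 0.1, 120, 2, 10),
    Comment("Indirect light works best.", 0.8, 15, 1, 400),
]
top_comments = rerank_comments(thread)
for comment in top_comments:
    print(comment.text)
```

With these placeholder weights, the popular but off-topic "Nice plant!" comment drops below the two comments that actually answer the query. The selected comments could then be shown as snippets or passed to a summarization model.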

Step 4: Enable Validation Through Community Context Aggregation

Users often need to validate a decision—like buying a vintage Corvette on a marketplace—but valuable advice is buried across multiple group discussions. Build a dedicated “validation view” that aggregates community knowledge for a given topic or product. Use entity linking (e.g., identifying “Corvette” mentions) and cluster related discussions. Then, using the hybrid retrieval system, pull up the most authoritative and diverse opinions. Display these in a structured format (e.g., pros/cons, top advice) to help users make informed decisions.
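To make the aggregation idea concrete, the sketch below groups posts that mention one entity into a pros/cons view. Everything in it is a simplifying assumption: the alias table is a naive stand-in for a real entity linker, the `stance` label is assumed to be computed upstream, and capping posts per group is just one simple way to encourage diverse opinions.

```python
from collections import defaultdict

# Hypothetical alias table standing in for a real entity linker.
ENTITY_ALIASES = {
    "corvette": "Chevrolet Corvette",
    "vette": "Chevrolet Corvette",
}


def link_entities(text):
    """Return the canonical entities mentioned in text (naive token match)."""
    tokens = {tok.strip(".,!?()\"'").lower() for tok in text.split()}
    return {ENTITY_ALIASES[tok] for tok in tokens if tok in ENTITY_ALIASES}


def build_validation_view(posts, entity, per_group_limit=1):
    """Bucket posts about `entity` by stance, capping posts taken per group."""
    view = defaultdict(list)
    taken = defaultdict(int)
    # Posts are visited in retrieval order, so higher-ranked posts
    # claim the per-group slots first.
    for post in posts:
        if entity not in link_entities(post["text"]):
            continue
        if taken[post["group"]] >= per_group_limit:
            continue
        taken[post["group"]] += 1
        view[post["stance"]].append(post["text"])
    return dict(view)


# Hypothetical posts, already ranked by the hybrid retrieval system.
posts = [
    {"group": "Classic Cars", "stance": "pro",
     "text": "My 1969 Corvette has held its value well."},
    {"group": "Classic Cars", "stance": "con",
     "text": "Corvette parts from that era are pricey."},
    {"group": "DIY Mechanics", "stance": "con",
     "text": "Check the vette frame for rust before buying."},
    {"group": "Gardening", "stance": "pro",
     "text": "Snake plants need little water."},
]
view = build_validation_view(posts, "Chevrolet Corvette")
print(view)
```

Raising `per_group_limit` trades diversity across groups for more depth from the most active group.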

Step 5: Automate Model-Based Evaluation

Set up an automated pipeline to measure search quality without manual labeling for every change. Use a model (like a fine-tuned BERT for relevance scoring) to judge the top retrieved results against a held-out set of queries or canonical answers. Run this evaluation after every modification to the retrieval or ranking pipeline. Monitor metrics such as Mean Reciprocal Rank (MRR), Recall@k, and Normalized Discounted Cumulative Gain (NDCG). The Facebook approach reported improved engagement and relevance with no increase in error rates by using this automated evaluation to guide iterations.
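The metrics named above can be computed with a few small functions. The sketch below uses the standard definitions; the `gains` dictionary is a hand-written stand-in for the graded relevance labels that the judging model would produce, and the document IDs are invented for the example.

```python
import math


def reciprocal_rank(ranked_ids, relevant):
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def recall_at_k(ranked_ids, relevant, k):
    """Fraction of all relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(ranked_ids[:k]) & set(relevant)) / len(relevant)


def ndcg_at_k(ranked_ids, gains, k):
    """NDCG@k, where gains maps doc ID -> graded relevance (missing = 0)."""
    dcg = sum(gains.get(doc_id, 0) / math.log2(i + 1)
              for i, doc_id in enumerate(ranked_ids[:k], start=1))
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(i + 1) for i, g in enumerate(ideal, start=1))
    return dcg / idcg if idcg > 0 else 0.0


def mean(values):
    values = list(values)
    return sum(values) / len(values) if values else 0.0


# Hypothetical evaluation set: one ranking and one set of judge labels per query.
runs = [
    {"ranked": ["a", "b", "c", "d"], "gains": {"b": 3, "d": 1, "e": 2}},
    {"ranked": ["x", "y", "z"], "gains": {"x": 2}},
]

mrr = mean(reciprocal_rank(r["ranked"], set(r["gains"])) for r in runs)
recall3 = mean(recall_at_k(r["ranked"], set(r["gains"]), 3) for r in runs)
ndcg4 = mean(ndcg_at_k(r["ranked"], r["gains"], 4) for r in runs)
print(f"MRR={mrr:.3f} Recall@3={recall3:.3f} NDCG@4={ndcg4:.3f}")
```

Averaging per-query values across the whole evaluation set gives one number per metric that can be tracked from one pipeline change to the next.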

Step 6: Iterate Based on Feedback and Metrics

Treat the system as a living product. Collect user feedback through A/B testing (e.g., comparing click-through rates, time on page, or follow-up search rates). Use the automated evaluation model to flag regressions. For example, if semantic search starts returning unrelated results, lower the weight given to semantic results in the fusion step or fine-tune the embedding model on domain-specific data. Continuously refine the hybrid fusion parameters to balance lexical precision against semantic recall.
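One lightweight way to flag regressions is to compare a candidate's offline metrics against the current baseline with a small tolerance. The sketch below assumes both sets of metrics come from the same evaluation set; the metric names, values, and the 0.01 tolerance are made-up illustrations, not reported results.

```python
def find_regressions(baseline, candidate, tolerance=0.01):
    """Return metrics where candidate falls below baseline by more than tolerance.

    The result maps metric name -> (baseline value, candidate value).
    """
    return {
        name: (baseline[name], candidate[name])
        for name in baseline
        if name in candidate and candidate[name] < baseline[name] - tolerance
    }


# Illustrative numbers only.
baseline = {"mrr": 0.62, "recall@10": 0.81, "ndcg@10": 0.70}
candidate = {"mrr": 0.64, "recall@10": 0.78, "ndcg@10": 0.695}

regressions = find_regressions(baseline, candidate)
if regressions:
    print("Blocked by regressions:", regressions)
else:
    print("No regressions beyond tolerance")
```

Here the candidate improves MRR but loses recall, so it would be held back for investigation. The tolerance keeps small fluctuations, like the slight NDCG dip, from blocking every change.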

Step 7: Scale and Monitor for New Languages and Domains

If your community spans multiple languages, extend the embedding model to support cross-lingual retrieval (using multilingual models like multilingual BERT). Monitor the system’s performance on new topics by adding them to your evaluation set. Ensure that the lexical index updates frequently to capture new community jargon (e.g., “Covid” vs “coronavirus”). The goal is to maintain the same low error rates while scaling to millions of posts.
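When monitoring new languages or topics, an overall average can hide a weak segment, so it may help to break evaluation results down by slice. The sketch below assumes each evaluated query is stored as a dictionary with a per-query `score` (for example, its reciprocal rank) and a slice label such as `lang`; both field names and the sample values are hypothetical.

```python
from collections import defaultdict


def mean_by_slice(results, slice_key):
    """Average the per-query score within each slice (e.g., language or topic)."""
    totals = defaultdict(lambda: [0.0, 0])  # slice -> [score sum, query count]
    for row in results:
        bucket = totals[row[slice_key]]
        bucket[0] += row["score"]
        bucket[1] += 1
    return {name: total / count for name, (total, count) in totals.items()}


# Hypothetical per-query results from the evaluation pipeline.
results = [
    {"query": "snake plant care", "lang": "en", "score": 1.0},
    {"query": "vintage corvette rust", "lang": "en", "score": 0.5},
    {"query": "cuidado de plantas", "lang": "es", "score": 0.25},
]
per_language = mean_by_slice(results, "lang")
print(per_language)
```

A slice that scores well below the others, like the Spanish queries in this toy data, is a signal to add more evaluation queries for it and to check how well the embedding model covers that language.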

Tips for Success

- Start small: Pilot your hybrid retrieval on a single, well-understood community before rolling out widely. This allows you to tune parameters without overwhelming your team.

- Use real user queries: Base your evaluation queries on actual search logs—not synthetic ones—to capture genuine language gaps.

- Involve community members: Ask power users to flag particularly bad or good results; their insights can reveal edge cases your automated evaluation misses.

- Combine human and automated judgment: While automated models are efficient, periodically run human relevance judgments to validate the model’s calibration.

- Consider privacy: When aggregating community knowledge, ensure you respect user privacy and content permissions. Anonymize if necessary.

- Document your architecture: Maintain clear documentation of your hybrid retrieval setup, fusion algorithm, and evaluation criteria. This helps new team members onboard quickly and reduces technical debt.

By following these steps, you can replicate the core innovations that modernized Facebook Groups Search: a hybrid retrieval system that bridges the gap between user intent and community language, an effort-reducing consumption layer, and an automated evaluation engine that keeps quality high. Your users will no longer feel lost in translation, burdened by scrolling, or uncertain about validation—they’ll unlock the full power of community knowledge.